Businesses are adopting generative AI fast, but many still struggle to move from pilots to scaled value. Boston Consulting Group reports that 74% of companies “have yet to show tangible value” from AI, while only a small fraction have developed “cutting-edge” capabilities across functions. McKinsey & Company similarly finds organizations are early in disciplined rollouts; in a complementary survey, only 1% of executives describe their gen AI rollouts as “mature,” and fewer than one-third report following most of the adoption and scaling practices linked with EBIT impact.

This is the gap your proposed delivery model targets: not “Can we build an agent?” but “Can we implement an agentic workflow that reliably changes how work gets done—safely, measurably, and at scale?” That gap is increasingly described as socio-technical and operating-model-driven, not model-driven. BCG’s research attributes roughly 70% of AI implementation challenges to people and process issues (versus 20% technology/data and 10% algorithms).

Your combined framing is strong:

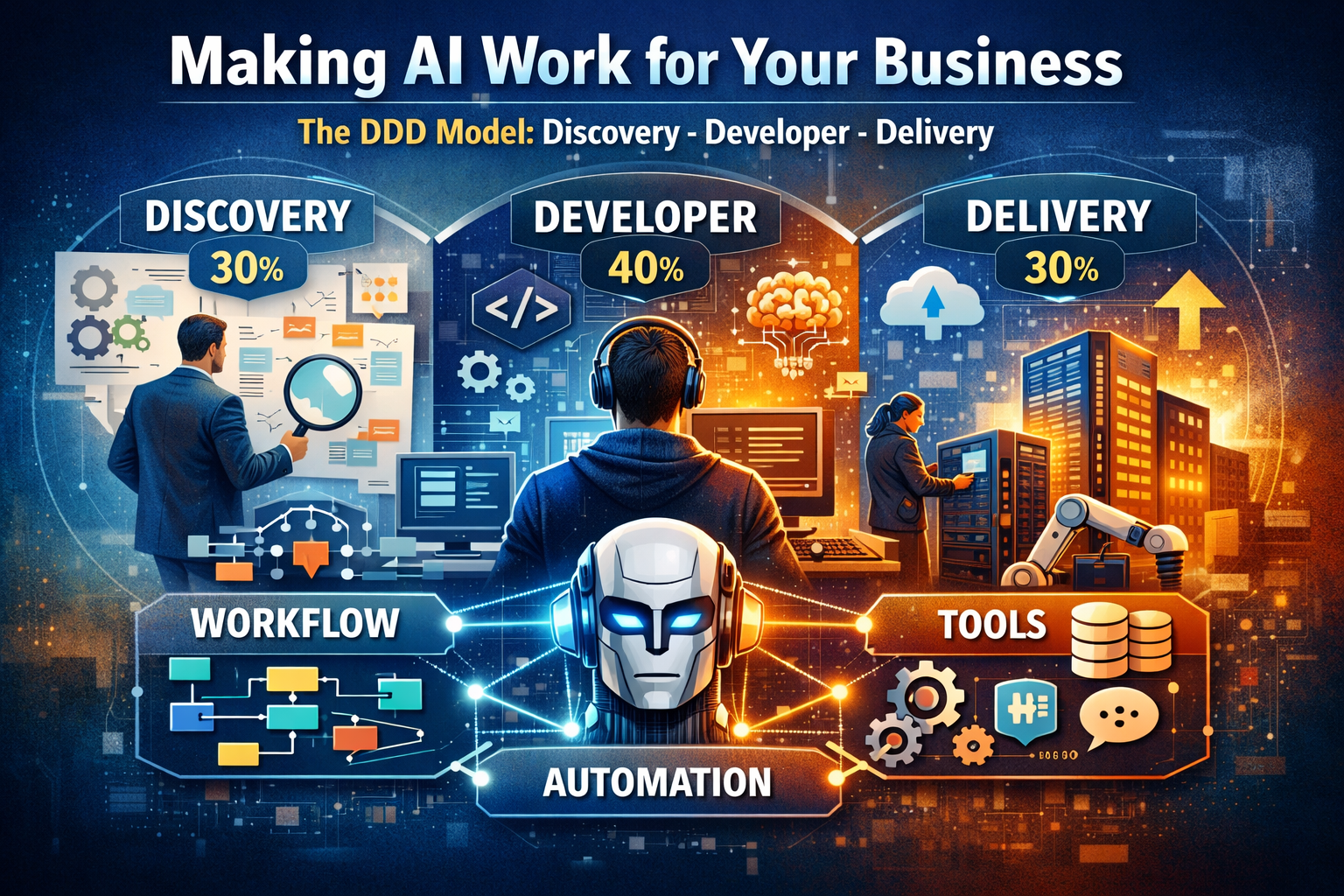

- WAT (Workflow, Automation, Tools) defines what an enterprise AI Agent actually is: a workflow, automated with orchestration, using tools (LLMs + enterprise systems) to complete work.

- DDD (Discovery, Development, Delivery) defines how to implement WAT successfully: understand the real process and constraints, build the right automation and tool interactions, then operationalize it in production until it creates durable business outcomes.

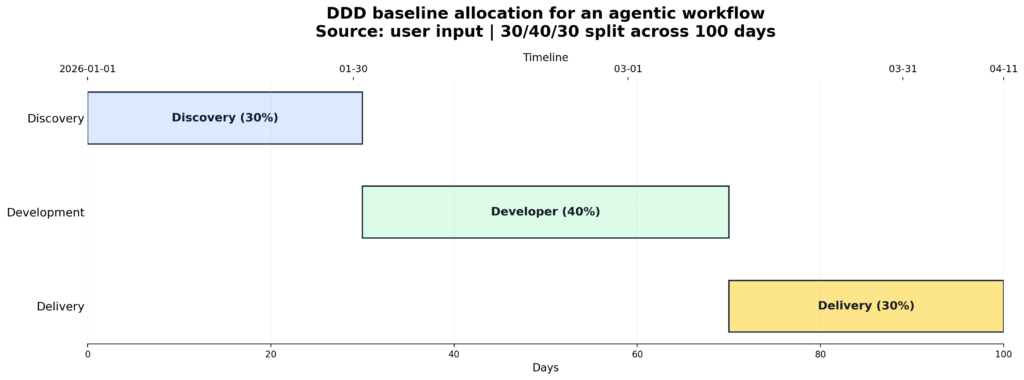

This report argues—in a practical, evidence-based way—that a baseline 30% Discovery / 40% Development / 30% Delivery allocation is a defensible “default split” for agentic workflows. It aligns with (1) risk governance practices emphasized by National Institute of Standards and Technology, where governance and context mapping are foundational and continuous; (2) adoption/scaling practices that correlate with financial impact; and (3) responsible AI and security guidance that spans design → develop → deploy → operate.

What follows includes: a recent literature review; comparative phase-split analysis; a detailed DDD implementation blueprint (activities, artifacts, roles, KPIs); recommended WAT architecture patterns and tooling; three case-backed examples with before/after metrics; and a practitioner toolkit (deployment checklist, governance/compliance mapping, cost/time estimation templates, adoption plan).

Literature review of AI implementation, agentic workflows, HITL, change management, and governance

Recent research and guidance converge on five themes that strongly support a delivery model like DDD.

Agentic workflows are not “chatbots with a UI”

Academic surveys describe LLM-based autonomous agents as systems that combine language models with modules for planning, memory, and actions (often via tools/APIs). This matters operationally: once an agent can take actions (create tickets, update ERP fields, send emails, trigger RPA bots), business risk and governance requirements expand materially compared with “read-only” Q&A.

Two influential technical lines of work explain why tool-using agents are becoming the default for enterprise automation:

- ReAct shows performance and trust gains when language models interleave reasoning with actions that query external sources, reducing hallucination/error propagation in knowledge-intensive tasks by grounding outputs in retrieved facts.

- Toolformer demonstrates language models can be trained to decide when to call tools and how to incorporate tool outputs, aiming to overcome basic limitations (e.g., arithmetic, factual lookup) using external APIs.

In business terms: agents work best when the workflow design deliberately decides what is deterministic (workflow rules, approvals, system-of-record updates) and what is probabilistic (classification, extraction, summarization, drafting), then constrains probabilistic steps within audited automation. This aligns closely with your WAT emphasis.
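The deterministic/probabilistic split can be sketched as a fixed rule wrapped around a model output. This is a minimal illustration, not a reference design: `classify_document` is a hypothetical stand-in for a model call, and the label set and confidence threshold are assumed values.

```python
from dataclasses import dataclass

ALLOWED_LABELS = {"invoice", "credit_note", "statement"}  # assumed label set
MIN_CONFIDENCE = 0.85  # assumed threshold; tune per workflow


@dataclass
class Prediction:
    label: str
    confidence: float


def classify_document(text: str) -> Prediction:
    """Hypothetical stand-in for a probabilistic model call."""
    if "invoice" in text.lower():
        return Prediction("invoice", 0.93)
    return Prediction("unknown", 0.40)


def route(text: str) -> str:
    """Deterministic rule that decides what happens to a probabilistic output."""
    pred = classify_document(text)
    if pred.label not in ALLOWED_LABELS or pred.confidence < MIN_CONFIDENCE:
        return "human_review"  # uncertain or unexpected output never acts alone
    return f"auto:{pred.label}"
```

The model only proposes a label; the workflow rule, which can be versioned and audited, decides whether that proposal is allowed to proceed.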

Human-in-the-loop is consistently recommended for higher-stakes deployments

Human-in-the-loop (HITL) is not one pattern but a family of patterns: approval gates, exception queues, feedback loops, escalation pathways, and post-hoc audits. A major review of human-in-the-loop machine learning emphasizes the breadth of “human involvement” concepts and argues success depends on designing the interactions and user experience around AI, not only the model.

Enterprise platform guidance increasingly expresses HITL as a standard control pattern. Microsoft explicitly recommends keeping a human in the loop, maintaining human decision-making, and ensuring real-time human intervention—especially to manage cases where the model does not perform as required.

The practical implication for delivery models: HITL is not “a feature you add later.” It is a design constraint that must be surfaced during Discovery (risk classification, decision rights), implemented during Development (approval workflow, audit logs), and validated during Delivery (operational readiness, user training, governance sign-off).

Change management and operating model readiness are first-order constraints

AI implementation is regularly framed as socio-technical change. A 2025 peer-reviewed retail study based on expert interviews explicitly analyzes organizational hurdles and proposes an “AI Implementation Compass” for change management across micro/meso/macro factors.

Industry research converges on the same conclusion at scale. BCG emphasizes that AI leaders “focus their efforts on people and processes over technology and algorithms” and attributes the majority of implementation challenges to people/process issues. This supports a delivery model that reserves meaningful budget not only for building, but also for the messy reality of operational adoption (training, incentives, redesigned SOPs, and cross-functional decision rights).

Governance is becoming lifecycle-based and auditable

NIST’s AI Risk Management Framework formalizes a lifecycle view: the Core is organized into GOVERN, MAP, MEASURE, MANAGE, with GOVERN described as cross-cutting across the other functions. In the MAP function, NIST explicitly calls out eliciting system requirements from relevant AI actors and defining/documenting processes for human oversight aligned with governance policies. NIST also frames trustworthy AI as multi-criteria (valid/reliable, safe, secure/resilient, accountable/transparent, explainable/interpretable, privacy-enhanced, fair with harmful bias managed) and stresses that context determines how these are balanced and measured.

NIST’s Generative AI Profile extends this to genAI specifically and positions profiles as ways to manage risks across stages of the AI lifecycle and common business processes (including use of LLMs and cloud services).

Complementing NIST, governance is also being codified via management-system standards. International Organization for Standardization describes ISO/IEC 42001 as an integrated approach to managing AI projects, from risk assessment to treatment of risks, aimed at responsible and effective use across diverse applications.

Responsible AI and security guidance is shifting toward practical engineering controls

Amazon Web Services frames responsible AI as deliberate design choices per use case and points teams to best-practice lenses across design, development, and operation—explicitly recognizing that moving from “idea to trusted production workload” requires balancing benefits and risks. AWS’s Responsible Use of AI Guide organizes recommendations across phases (design, develop, deploy, operate) and emphasizes programmatic objectives, leaders, metrics, and resourcing for responsible AI capabilities.

On security, OWASP’s Top 10 for LLM Applications identifies leading vulnerability categories such as prompt injection, insecure output handling, sensitive information disclosure, insecure plugin design, and supply chain vulnerabilities. This reinforces why “Delivery” must include monitoring, controls, incident response, and continuous improvement—not only deployment mechanics.

Summary table of the evidence base

| Theme | What the last 5 years of evidence emphasizes | Why it supports DDD + WAT |
|---|---|---|
| Scaling value | Most firms struggle to scale AI value; leaders focus transformation over pilots | Discovery and Delivery must be funded as real work, not overhead |
| Adoption practices | Tracking KPIs, roadmaps, and change story correlate with impact | Delivery must include adoption/measurement systems, not just go-live |
| Lifecycle governance | Cross-cutting governance + context mapping + ongoing risk management | Discovery formalizes context/requirements; Delivery sustains monitoring/controls |
| Agent architecture | Agents combine planning/memory/actions; tool use improves grounding | Development must design tools + orchestration, not just prompts |
| HITL and security | Human oversight and LLM security risks require engineered controls | HITL gates + secure tool access must be designed early and validated in prod |

Comparative analysis of phase splits and why the proposed allocation is robust

A phase split is ultimately a risk allocation strategy. In agentic workflows, the dominant failure modes are rarely “we couldn’t write the code.” They are more commonly:

- automating the wrong workflow (or the wrong variant of the workflow),

- missing decision rights and exception handling,

- deploying without trust, controls, or adoption readiness,

- failing to operationalize measurement, governance, and continuous improvement.

These align with industry findings that people/process factors dominate scaling challenges.

How DDD maps to governance and scaling practices

Your 30/40/30 baseline aligns cleanly with lifecycle recommendations:

- Discovery corresponds to NIST-style context mapping and requirements elicitation, including oversight design.

- Development builds the WAT system: orchestration + tool integration + controls, consistent with the agentic shift toward shared platforms for memory, orchestration, tool registries, and governance described in BCG’s multi-agent transformation guidance.

- Delivery implements adoption practices shown to correlate with financial impact (KPIs, roadmaps, feedback loops, change story), and operationalizes responsible AI across deploy + operate phases.

Alternative splits, when they happen, and typical risks

| Split pattern | Why teams choose it | Where it works | Common risks in agentic workflows | Risk symptoms you’ll see |
|---|---|---|---|---|
| 20 / 60 / 20 | “We just need to build fast” | Narrow, low-risk tools; internal copilots | Wrong workflow assumptions; missing oversight; production surprises | Rework spikes; approvals added late; trust issues slow rollout |
| 15 / 70 / 15 | Engineering-led pilots | Prototypes, demos | Adoption failure; governance gaps; “pilot loop” | Live users bypass system; silent non-use; no KPI linkage |
| 40 / 40 / 20 | High-stakes environments | Highly regulated or safety-critical | Analysis paralysis if too document-heavy | Slow value realization; stakeholder fatigue |
| 25 / 45 / 30 | Balanced but build-tilted | Many operational automations | Underfunded discovery for multi-variant processes | Exceptions overwhelm; workflow breaks at edge cases |
| 30 / 40 / 30 | Workflow + adoption centered | Most enterprise agentic workflows | Requires discipline to keep discovery outcome-driven | When done well: fewer surprises + faster adoption at scale |

Why 30/40/30 is a strong default: it explicitly funds the two areas industry evidence flags as hardest—process/people readiness and scaling practices—while still reserving the largest single share for engineering execution.

The DDD implementation blueprint for WAT-based AI Agents

The most practical way to implement your model is to treat each DDD phase as producing a decision-ready artifact set. In other words: Discovery is not meetings; it is the production of artifacts that allow Development to build against precise requirements and Delivery to proceed without surprises.

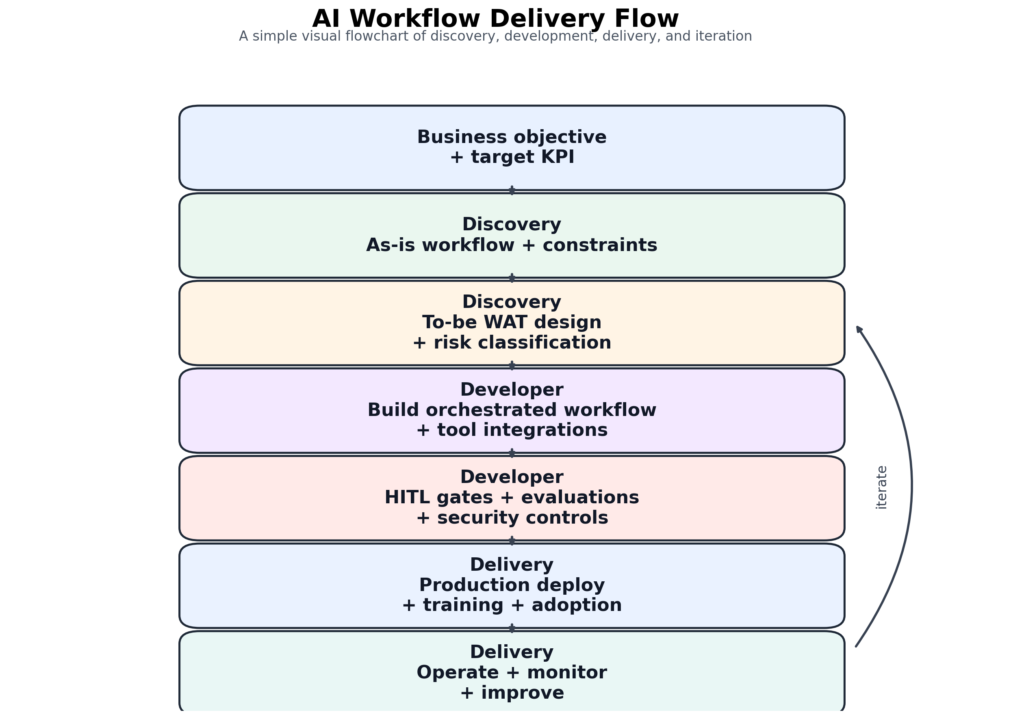

End-to-end DDD process flow

Phase-by-phase activities, deliverables, roles, and KPIs

The tables below are designed as a reusable “implementation playbook” you can attach to proposals.

Discovery phase deliverables and exit criteria

Discovery is where you earn the right to automate. NIST explicitly calls out eliciting requirements from relevant AI actors and designing human oversight processes. Microsoft’s Responsible AI Standard similarly requires impact assessment early (when defining vision/requirements), review/approvals before development starts, and updates at least annually or before advancing release stages.

| Workstream | Core activities | Artifacts produced | Primary roles | Phase KPIs / exit criteria |
|---|---|---|---|---|
| Process understanding | Process walk-throughs; shadowing; variant mapping; exception inventory | As-is process map (BPMN); exception catalog; pain-point heatmap | Process Owner, BA, SME | ≥80–90% of volume represented by mapped variants; exception coverage defined |
| Outcome & ROI | Define target KPIs; baseline measurement plan; benefits hypothesis | KPI tree + baseline plan; ROI model assumptions | Exec Sponsor, Product Owner, Finance Analyst | Baseline metrics collected; KPI definitions agreed |
| WAT design | Identify workflow boundaries; automation triggers; decision rights; tool inventory | To-be process map; WAT component spec; tool access matrix | AI Product Owner, Solution Architect | Signed-off to-be workflow; tool access design reviewed |
| Data & integration readiness | Data sources; systems-of-record; API feasibility; identity model | Data dictionary; integration architecture; environments inventory | Data Engineer, Integration Lead, Security | Data readiness score; integration feasibility validated |
| Risk & governance | Risk classification; HITL design; audit requirements | Risk register; HITL control plan; logging/audit requirements | Risk/Compliance, Security, Legal | Governance sign-off criteria defined; sensitive data handling approach agreed |
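The variant-coverage exit criterion in the first row can be checked with simple arithmetic. The sketch below assumes you have transaction volumes per process variant; the variant names and volumes are invented for illustration, and the 0.80 threshold is the lower bound of the range in the table.

```python
def variant_coverage(volume_by_variant: dict, mapped: set) -> float:
    """Share of total volume that falls into variants Discovery has mapped."""
    total = sum(volume_by_variant.values())
    if total == 0:
        return 0.0
    covered = sum(v for name, v in volume_by_variant.items() if name in mapped)
    return covered / total


# Hypothetical monthly volumes per invoice variant (illustrative numbers only).
volumes = {"standard_po": 700, "non_po": 200, "credit_note": 60, "foreign_currency": 40}
mapped_variants = {"standard_po", "non_po"}

coverage = variant_coverage(volumes, mapped_variants)
meets_exit_criterion = coverage >= 0.80  # lower bound of the 80-90% target
```

With these sample numbers, two mapped variants already cover 900 of 1,000 transactions, which is one way to argue that Discovery can stop mapping and move on.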

Development phase deliverables and exit criteria

This is the engineering core: implementing WAT. Agent research shows tool use and structured interaction can improve performance and interpretability; the practical translation is tool-augmented and orchestrated automation rather than prompt-only systems. BCG’s agentic transformation guidance distinguishes between “agent platforms” and “agent products,” highlighting shared infrastructure needs for memory, orchestration, tool registries, and governance.

| Workstream | Core activities | Artifacts produced | Primary roles | Phase KPIs / exit criteria |
|---|---|---|---|---|
| Workflow orchestration | Implement deterministic workflow; retries; SLAs; exception queues | Orchestration definitions; state machine diagrams; run-time configs | Platform Engineer, Automation Lead | Workflow test pass rate; exception routes verified |
| LLM engineering | Prompt design; tool calling; grounding patterns; evaluation harness | Prompt library; tool schemas; evaluation dataset | LLM Engineer, QA Lead | Accuracy/quality thresholds met on representative test set |
| Retrieval & knowledge | Build RAG or knowledge services; provenance logging | Vector index pipeline; document ingestion specs | Data Engineer, Architect | Retrieval latency; citation/provenance coverage |
| HITL controls | Approval gates; dual control for high-risk actions; auditability | HITL UI/work queue; audit logs; escalation rules | Product Owner, Security, Ops Lead | HITL flows tested end-to-end; audit evidence demonstrable |
| Security engineering | Guardrails; allow-lists; secret management; threat modeling | Threat model; abuse cases; secure config baselines | Security Engineer, Architect | OWASP LLM risks addressed; pen-test issues triaged |

Delivery phase deliverables and exit criteria

Delivery is where AI becomes a business capability. McKinsey’s findings show adoption practices correlate with EBIT impact, especially tracking well-defined KPIs and establishing a clear adoption roadmap; yet most organizations do not implement these practices consistently. Responsible AI guidance also stresses ongoing operation and monitoring across the lifecycle.

| Workstream | Core activities | Artifacts produced | Primary roles | Phase KPIs / exit criteria |
|---|---|---|---|---|
| Production readiness | CI/CD; environment hardening; rollout strategy | Deployment pipeline; runbooks; rollback plan | DevOps/SRE, Architect | Successful staging → prod cutover; rollback tested |
| Monitoring & operations | Telemetry; cost monitoring; incident management | KPI dashboards; alerting; incident playbooks | SRE, Ops Manager | SLA met; incident response in place; cost within budget |
| Adoption & training | Role-based training; comms; change story; incentives | Training assets; SOP updates; comms plan | Change Lead, Business Champion | Active users; cycle-time improvements; training completion |
| Continuous improvement | Feedback loop; prompt/version governance; model updates | Change log; weekly improvement backlog | Product Owner, Ops, QA | KPI trend improvement; defect rate decreasing |

Timeline representation of the proposed baseline allocation

WAT tooling and architecture patterns that make agents safe and operable

A recurring enterprise lesson is that agentic solutions scale when they are engineered as workflow systems that use AI, not as AI demos that hope to become workflows. This also tracks AWS’s observation that ML differs from rule-based software and requires balancing AI-specific risks with standard engineering practices to reach trusted production.

Reference architecture patterns for WAT

Workflow (W): treat the business process as the “source of truth” (states, approvals, exceptions).

Automation (A): orchestrate transitions deterministically with event-driven controls and auditable logs.

Tools (T): give the agent constrained capabilities: LLM(s), retrieval, APIs, RPA actions, and analytics.

BCG explicitly describes the need for shared platform infrastructure in scalable agent deployments: memory, orchestration, tool registries, and governance.
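To make the "workflow as source of truth" idea concrete, here is a minimal state-machine sketch with an explicit exception lane and a transition history. The state names describe a hypothetical invoice workflow and are assumptions, not part of any cited framework; a production system would typically use an orchestration engine rather than hand-rolled code.

```python
# Allowed transitions for a hypothetical invoice workflow.
# Every working state can fall into the "exception" lane.
TRANSITIONS = {
    "received": {"extracted", "exception"},
    "extracted": {"matched", "exception"},
    "matched": {"approved", "exception"},
    "exception": {"extracted", "rejected"},
    "approved": set(),
    "rejected": set(),
}


class Workflow:
    def __init__(self) -> None:
        self.state = "received"
        self.history = [("start", "received")]  # simple audit trail

    def advance(self, new_state: str) -> None:
        """Move to new_state only if the workflow definition allows it."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state} -> {new_state}")
        self.history.append((self.state, new_state))
        self.state = new_state
```

Whatever an agent suggests, it can only move a case along edges the workflow definition permits, and each move leaves a record.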

Tooling categories and practical options

This table is written to be vendor-neutral, while referencing the controls implied by responsible AI and security sources.

| WAT component | Recommended pattern | Typical tooling options (examples) | Key operational KPI |
|---|---|---|---|
| Workflow | Workflow-first (state machine/BPMN); explicit exception lanes | Orchestrators (Temporal/Camunda/Zeebe), cloud workflows (Step Functions), BPMN engines | End-to-end cycle time; exception rate |
| Automation | Event-driven triggers; idempotent actions; retries; SLA timers | Message bus (Kafka), iPaaS connectors, RPA triggers | SLA breach rate; retry success rate |
| LLM layer | “Constrained agent”: tool calling + allow-lists + output validation | Model gateway, prompt registry, policy checks | Tool-call success rate; hallucination proxy rates |
| Retrieval | RAG with provenance and access control | Vector DBs (pgvector, Milvus, Pinecone, OpenSearch vectors), search engines | Retrieval precision/recall; latency |
| RPA | RPA only where APIs don’t exist; keep robots behind orchestration | RPA platforms; attended vs unattended bots | Robot failure rate; manual fallback volume |
| Security | Treat OWASP LLM Top 10 as baseline threat catalog | Prompt injection defenses; secret vault; egress controls | Security incidents; blocked malicious prompts |
| Monitoring | Full trace (inputs → tool calls → outputs); PII redaction | Observability (OpenTelemetry), LLM telemetry, cost monitors | Cost per transaction; incident MTTR |
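The "idempotent actions; retries" pattern in the Automation row can be illustrated with a small helper. This is a sketch under simplifying assumptions: the idempotency store is an in-memory dictionary (a real deployment would need a durable store), and `run_idempotent` is a hypothetical helper name, not an API of any tool listed above.

```python
import time

_completed = {}  # idempotency key -> result; in-memory only for this sketch


def run_idempotent(key, action, max_attempts=3, base_delay=0.0):
    """Run action at most once per key, retrying transient failures with backoff."""
    if key in _completed:
        return _completed[key]  # already done: return the stored result, do not repeat
    last_error = None
    for attempt in range(max_attempts):
        try:
            result = action()
            _completed[key] = result
            return result
        except Exception as exc:  # broad catch is acceptable only in a sketch
            last_error = exc
            time.sleep(base_delay * (2 ** attempt))  # exponential backoff
    raise RuntimeError(f"action {key!r} failed after {max_attempts} attempts") from last_error
```

The key point is that a retried or replayed event with the same key cannot post the same payment or create the same ticket twice.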

Security risks should be explicitly designed for. OWASP’s Top 10 enumerates prompt injection and insecure output handling as leading risk categories—particularly relevant to agentic tool use.

Human oversight patterns that work in real operations

Microsoft’s guidance is direct: keep humans in decision-making and ensure real-time intervention capability. NIST similarly positions governance as cross-cutting and explicitly includes oversight process definition in mapping activities.

In practice, three HITL designs cover most business workflows:

- Pre-action approval: agent drafts a plan + tool calls; human approves before execution (ideal for financial postings, customer-impacting actions).

- Exception queue: agent runs straight-through for routine cases; routes uncertain or policy-violating cases to a work queue.

- Post-action audit: agent acts within bounded permissions; random sampling + anomaly triggers drive audits (more common in low-risk scenarios).
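The three designs above can be expressed as a single routing decision. The sketch below is one possible encoding, with assumed inputs: a risk tier assigned during Discovery, a model confidence score, and a policy-check result. The 0.8 threshold is a placeholder.

```python
from enum import Enum


class Oversight(Enum):
    PRE_ACTION_APPROVAL = "pre_action_approval"
    EXCEPTION_QUEUE = "exception_queue"
    POST_ACTION_AUDIT = "post_action_audit"


def choose_oversight(risk: str, confidence: float, policy_ok: bool,
                     threshold: float = 0.8) -> Oversight:
    """Pick the HITL pattern for one case (risk tiers and threshold are assumptions)."""
    if risk == "high":
        # Financial postings, customer-impacting actions: human approves first.
        return Oversight.PRE_ACTION_APPROVAL
    if not policy_ok or confidence < threshold:
        # Uncertain or policy-violating cases go to a human work queue.
        return Oversight.EXCEPTION_QUEUE
    # Routine, low-risk cases run straight through and are sampled afterwards.
    return Oversight.POST_ACTION_AUDIT
```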

Case-based examples with before/after metrics

The following examples use publicly reported outcomes to ground the DDD + WAT approach. Vendor and self-reported case studies can be optimistic, but they provide directional evidence and concrete metrics for what “good” can look like when workflow + automation + tools are integrated with operational change.

Finance example: invoice and document processing in accounts payable

A common AP baseline includes manual data capture, invoice validation, exception handling, and ERP entry. This is a canonical agentic workflow because it mixes deterministic policies (approval thresholds, matching) with probabilistic tasks (document extraction, classification).

Public outcomes illustrate the value of WAT:

- UiPath reports that Thermo Fisher Scientific achieved a 70% reduction in invoice processing time, with ~53% of invoices handled without human involvement, reducing workload tied to ~824,000 invoices annually.

- Ramp reports saving 30K hours of manual work monthly and processing 5 million receipts per month using Azure AI document tooling to automate finance workflows.

- EY reports reduced manual workload of ingesting forms by up to 90%, reduced manual interventions by 80%, and accelerated model production 17x in a document intelligence program supporting complex tax workflows.

Before/after metric frame (relative):

| Metric | Before (typical manual-heavy AP) | After (case evidence range) |
|---|---|---|
| Processing time | Baseline = 100 | ~30 (70% reduction) |
| Straight-through processing | Often low for varied invoices | ~53% automated in one program |
| Manual ingestion workload | High, especially for multi-page forms | Up to 90% reduction reported |

DDD application:

Discovery maps invoice variants and exception policies; Development implements OCR/extraction + matching + HITL approvals; Delivery focuses on SOP changes, exception work queues, and KPI dashboards (cycle time, touchless rate, error rate).

Service operations example: contact center agent-assist and self-service

Contact centers show a common pattern: the same customer questions repeat, but resolution requires navigating multiple systems and knowledge bases; agents spend time searching, summarizing, and documenting.

An AWS case study reports that Fractal Analytics achieved 10–15% average reduction in call handling time, 30% call deflection, and served 200,000+ monthly queries through a generative AI knowledge assist solution.

Separately, IBM reports 70% of customer support inquiries resolved with a digital assistant, 26% improvement in time to resolution for complex issues, and a 25-point increase in customer satisfaction, alongside operational savings since 2022.

Before/after metric frame:

| Metric | Before | After (case evidence) |
|---|---|---|
| Average handle time | Baseline | 10–15% reduction |
| Self-service / deflection | Baseline | 30% deflection |
| Digital resolution rate | Baseline | 70% resolved via digital assistant |

DDD application:

Discovery focuses on top call drivers, knowledge quality, and what actions must remain human-approved; Development builds retrieval + tool calling into CRM/ticketing; Delivery trains agents, updates QA and compliance scripts, and instruments KPIs (AHT, deflection, resolution quality).

Construction example: field documentation and site visibility workflows

Field workflows are often “manual glue work”: capture photos, label them, place them in the right project/job context, and keep the rest of the stakeholders aligned.

An AWS case study for PlanRadar reports customers can document 500 m² of a construction site in ~5 minutes, representing a 90% reduction in time vs manual photography, with 97% positioning accuracy.

Because the “after” time and the percentage reduction are both reported, the “before” time can be inferred: if ~5 minutes is 10% of the prior time, the prior time is ~50 minutes. This baseline is an inference from the reported reduction, not a figure stated in the case study.
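The same inference applies to any case study that reports only an "after" value and a percentage reduction. A minimal sketch of the arithmetic (the function name is illustrative):

```python
def implied_baseline(after_value: float, reduction: float) -> float:
    """Infer the 'before' value from an 'after' value and a fractional reduction."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return after_value / (1 - reduction)


# PlanRadar figures from the text: ~5 minutes after a 90% reduction.
baseline_minutes = round(implied_baseline(5, 0.90), 1)
```

Here `baseline_minutes` comes out at roughly 50, matching the inferred figure used in the table.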

Before/after metric frame:

| Metric | Before | After |
|---|---|---|
| Time to document 500 m² | ~50 minutes (inferred) | ~5 minutes |
| Accuracy of placement | Variable | 97% accuracy |

DDD application:

Discovery clarifies which documentation events trigger downstream actions (claims, progress billing, safety reporting); Development integrates capture + classification + auto-filing; Delivery operationalizes training, QA sampling, and governance for stored images and metadata.

Deployment checklist, governance mapping, estimation templates, and adoption plan

This section is designed to be copy-paste-ready into proposals and delivery playbooks.

Production deployment checklist for agentic workflows

This checklist integrates governance and security expectations described by NIST, AWS responsible AI lifecycle guidance, and OWASP LLM threat categories.

Pre–go-live controls

- Workflow definition is versioned and approved (states, exception routes, SLAs).

- Human oversight points are explicitly defined and tested end-to-end (approval and escalation paths).

- Impact/risk assessment is completed and approved before release (and scheduled for periodic review).

- Tool access is least-privilege and allow-listed; high-risk tool calls require approval (dual control).

- Data classification and PII handling are implemented (redaction, retention rules, access logs).

- Prompt-injection and insecure-output controls are validated against known abuse cases (OWASP categories).

- Evaluation baseline exists (representative cases, acceptance thresholds, failure budgets).

- Rollback and “kill switch” mechanisms are tested (ability to supersede/deactivate if outcomes diverge).
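Two of the controls above, allow-listed tool access with approval for high-risk calls and a kill switch, can be sketched together as a small gate in front of every tool call. The tool names and risk tiers are hypothetical, and a single approver stands in for full dual control to keep the example short.

```python
from typing import Optional

# Hypothetical allow-list: tool name -> risk tier. Anything absent is denied.
ALLOWED_TOOLS = {"lookup_vendor": "low", "post_payment": "high"}


class ToolGate:
    def __init__(self) -> None:
        self.enabled = True  # kill switch: set to False to stop all tool calls

    def authorize(self, tool: str, approved_by: Optional[str] = None) -> bool:
        """Return True only if the call is allowed under the current policy."""
        if not self.enabled:
            return False  # kill switch engaged
        risk = ALLOWED_TOOLS.get(tool)
        if risk is None:
            return False  # not on the allow-list
        if risk == "high" and approved_by is None:
            return False  # high-risk calls need a named human approver
        return True
```

Testing the kill switch before go-live then becomes a concrete assertion: flip `enabled` and confirm that even approved, allow-listed calls are refused.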

Go-live readiness

- Canary rollout plan exists (phased users/teams; monitored KPIs).

- On-call, incident runbooks, and escalation owners are defined.

- User training, updated SOPs, and support channels are live.

- KPI dashboards are accessible to both business owners and engineering.
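A canary rollout needs a stable way to decide who is in the early cohort. One common approach, sketched here as an assumption rather than a prescribed method, is to hash the user ID into a bucket so that assignment is deterministic and widening the percentage never removes anyone already included.

```python
import hashlib


def in_canary(user_id: str, percent: int) -> bool:
    """Deterministically place a user in the first `percent` of 100 buckets."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return bucket < percent
```

Raising `percent` from 10 to 50 keeps every user who was already in the 10% group, which makes before/after KPI comparisons across rollout phases easier to interpret.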

Post–go-live controls

- Weekly review cadence is scheduled (exceptions, model updates, process drift, backlog).

- Audit evidence is retained (requests, tool calls, outputs, approvals).

- Security monitoring flags prompt injection attempts, sensitive data leakage patterns, and anomalous tool usage.

- Continuous improvement loop exists (feedback incorporated into workflow/prompt/tool changes).
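Retaining audit evidence usually means writing one structured record per agent run. The sketch below shows a possible record shape with basic redaction; the field names are assumptions, and the e-mail pattern is a deliberately simple illustration that would not catch all PII in practice.

```python
import json
import re
from datetime import datetime, timezone

# Simplistic e-mail pattern for illustration; real PII detection needs more.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(text: str) -> str:
    return EMAIL.sub("[REDACTED_EMAIL]", text)


def audit_record(request: str, tool_calls: list, output: str, approver=None) -> str:
    """Serialize one run (request, tool calls, output, approval) as a JSON line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "request": redact(request),
        "tool_calls": tool_calls,
        "output": redact(output),
        "approver": approver,
    }
    return json.dumps(record, sort_keys=True)
```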

Governance and compliance considerations mapped to DDD

This table makes it easy to explain to executives how governance becomes “part of delivery,” not a separate committee.

| Governance element | Where it lives in DDD | Evidence alignment |
|---|---|---|
| Governance as cross-cutting function | All phases, anchored in Discovery | NIST frames GOVERN as cross-cutting across lifecycle functions |
| Requirements + oversight definition | Discovery | NIST MAP requires requirements elicitation and oversight process definition |
| Measurement + evaluation | Development + Delivery | NIST MEASURE emphasizes validation and tracking risks over time |
| Operational risk treatment | Delivery | NIST MANAGE includes response/recovery and ability to deactivate systems |
| Management system framing | Program-level | ISO/IEC 42001 positions integrated lifecycle governance from risk assessment to treatment |

Cost and time estimation templates

These templates are intentionally simple, so you can use them in discovery proposals without overfitting.

Template A: DDD effort allocation worksheet

| Phase | Allocation | Typical cost drivers | Estimation inputs |
|---|---|---|---|
| Discovery | 30% | Stakeholder workshops; process mapping; data/integration assessment; risk review | # workflows, # variants, # systems, compliance level |
| Development | 40% | Orchestration build; tool integration; RAG; HITL UI; testing & evaluation | # integrations, # tools, latency/SLA targets, security controls |
| Delivery | 30% | Deployment; monitoring; training; change mgmt; stabilization; hypercare | # user groups, rollout complexity, support coverage window |
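Applied to a concrete budget, the worksheet reduces to a one-line calculation per phase. The sketch below uses the 30/40/30 baseline from the table; the 120-day total is an invented example, and the split can be overridden for the alternative patterns discussed earlier.

```python
DEFAULT_SPLIT = {"Discovery": 0.30, "Development": 0.40, "Delivery": 0.30}


def allocate(total_days: float, split: dict = DEFAULT_SPLIT) -> dict:
    """Divide a total effort budget across DDD phases according to split."""
    if abs(sum(split.values()) - 1.0) > 1e-9:
        raise ValueError("split shares must sum to 1")
    return {phase: round(total_days * share, 1) for phase, share in split.items()}


plan = allocate(120)  # hypothetical 120 person-day engagement
```

For 120 person-days this yields 36 days of Discovery, 48 of Development, and 36 of Delivery.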

Template B: Line-item cost model (fill-in)

| Line item | Unit | Quantity | Rate | Subtotal |
|---|---|---|---|---|
| Discovery workshops + process mapping | day | | | |
| Data/integration assessment | day | | | |
| Risk & impact assessment | day | | | |
| Orchestration engineering | day | | | |
| LLM + retrieval engineering | day | | | |
| HITL UI / work queue | day | | | |
| Testing + evaluation | day | | | |
| Deployment + monitoring setup | day | | | |
| Training + SOP updates | day | | | |
| Hypercare + bug fixing | day | | | |
| Total | | | | |

A practical way to explain this model to clients is: the “unseen” work (Discovery and Delivery) is where value realization and risk control happen—consistent with industry evidence that scaling depends heavily on adoption practices, governance, and people/process readiness.

Adoption and measurement plan

McKinsey’s survey evidence is blunt: tracking well-defined KPIs and establishing a road map are among the most impactful adoption practices, yet most organizations do not do them consistently. Treat adoption as a planned system, not a hope.

A concise adoption plan that fits DDD:

Measurement design (Discovery)

- Define a KPI tree (cycle time, error rate, throughput, compliance breaches, cost per transaction).

- Capture a baseline and a target state.

- Define “quality gates” and acceptable failure budgets (what happens when the agent is uncertain).
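A quality gate with a failure budget can be written down as an explicit rule at Discovery time, so that "good enough to proceed" is agreed before anything is built. The sketch below is one possible formulation; the thresholds are placeholders to be set per workflow.

```python
def quality_gate(results: list, min_accuracy: float, failure_budget: int) -> str:
    """Return 'pass' if evaluated cases meet both accuracy and failure-count limits.

    results: one boolean per evaluated case (True = acceptable outcome).
    """
    if not results:
        return "hold"  # no evidence means no release
    failures = results.count(False)
    accuracy = results.count(True) / len(results)
    if accuracy >= min_accuracy and failures <= failure_budget:
        return "pass"
    return "hold"
```

A "hold" result would route the release decision, or the individual case, to the human oversight path defined for the workflow.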

Operational measurement (Delivery)

- Stand up dashboards aligned to business owners (not just technical logs).

- Run a phased rollout with comparisons (pilot group vs control group where possible).

- Add a feedback mechanism tied to workflow improvement (user flags → triage → fix → re-measure).

Adoption mechanics (Delivery)

- Role-based training and “what changed” SOP updates (mapped to job roles).

- Communication plan showing value created (explicitly cited by McKinsey as a common adoption practice).

- Governance reinforcement: transparency, human oversight, and intervention paths.